Marta R. Stoeckel

Physics teacher and STEM education PhD student into student thinking @MartaStoeckel

Homepage: https://mrstoeckel.wordpress.com

Pivot Interactives for Make-Up Labs

Posted in Uncategorized on January 21, 2019

This year, I’ve been able to pilot some of the new Pivot Interactives chemistry activities in my Chemistry Essentials course as part of their chemistry fellowship program. There is a much higher absence rate in Chemistry Essentials than in our other chemistry courses and one of the challenges I’ve been able to tackle with Pivot Interactives has been finding an approach for make-up labs that balances equity with a meaningful lab experience.

First, a little background on the course. My district offers four different chemistry courses, and Chemistry Essentials is designed to meet the minimum graduation requirements. Many of my students have seen limited success either in science in particular or in school in general and one of my challenges as a teacher is to make sure my students see my class as an opportunity to change the patterns they’ve experienced in other courses.

In my department, the standard approach when a student is absent from a lab has been to have them come in before or after school to complete it. The trick is that many of the same issues that keep a student from coming to class, such as obligations outside of school or transportation issues, can also make it difficult for them to come in outside of the school day. Even if I’m willing to bend for a student who talks to me, how many never do because they see coming in outside of school as just one more immovable barrier they face? This is doubly frustrating to students who have a study hall or similar space in the school day where they could make up the lab, but the lack of available space or staff to monitor lab safety means I can’t give students that opportunity.

My go-to has been to provide a make-up version of the lab with the data already filled in. While it gets away from requiring students to come in outside the school day, the data often feels like meaningless numbers when students don’t have any connection to how it was collected. Students also miss out on a lot of science practices, such as designing the experiment, using the necessary tools accurately, and the countless decisions that come with collecting your own data. While I think a student can make progress on these skills missing a lab here or there, a student who is gone frequently can easily miss out on a crucial part of the course.

Pivot Interactives has allowed me to give students something in between these two approaches. While it can’t completely replace the kinesthetic experiences that happen in an apparatus-based lab, students can still make qualitative visual observations and develop a clear understanding of where the measurements come from since they are seeing the experiment and taking the data themselves. I can also easily write a make-up version of the lab that includes experimental design and data collection decisions similar to those students had to make in the classroom. At the same time, students can complete the lab when and where it works for them, rather than having to make a small window of time work. As a result, many of this year’s make-up labs have felt more to students like an actual lab experience than a box to check using disembodied data.

Where Does the Energy Go?: Using Evidence-Based Reasoning to Connect Energy and Motion

Posted in Uncategorized on January 2, 2018

This post appears as an article in the January 2018 issue of The Science Teacher.

Stoeckel, M. (2018). Where does the energy go?: Using evidence-based reasoning to connect energy and motion. The Science Teacher, 85(1), 19-25.

Defining Electric Potential Difference by Moving a Multimeter’s Ground Probe

Posted in Uncategorized on December 29, 2017

This post appears as an article in the January 2018 issue of The Physics Teacher.

Stoeckel, M. (2018). Moving multimeter ground to define electric potential difference. The Physics Teacher, 56(1), 24-25. https://doi.org/10.1119/1.5018683

Making the Move to Standards-Based Grading

Posted in Uncategorized on June 9, 2015

This spring, I’ve spent a lot of time analyzing how the year went and trying to identify my biggest frustrations. My goal isn’t to wallow in negativity; I’m much more interested in figuring out what I can do differently next year to reduce or eliminate those frustrations. As I reflected on the year, I identified my two biggest frustrations:

- My students, at least near the start of the year, are very focused on points and this makes it difficult for them to take risks or try something they don’t have step-by-step directions for. This isn’t a surprise since most of my students are 12th graders who’ve done very well in school by focusing on points. While most students got comfortable not always knowing the answer immediately by the end of the year, I’d like to make that transition faster and less painful.

- Many of my students did a brain dump after each test and at the end of each term. I quickly found that when I wanted students to build on concepts from a previous unit or see connections to a topic from last trimester, I had to build in time to review the earlier concept. Like the focus on points, this serves students very well in most classes, including ones I’ve taught, and the majority of students eventually made the necessary shifts, but I’d like to help them make the jump much sooner.

Both of these frustrations are promoted by my grading system. I’ve used a fairly traditional gradebook where I record scores for selected labs and problem sets in one category and scores for large unit tests in a separate category (with a larger weight). Of course, when my grading system is built on accruing points, students will focus on points! Of course, when we have a unit test on some arbitrary date, then move on to a brand new topic with its own big test, students will mentally move on as well! Clearly, it is time for me to take a new approach to grading.

I decided to dive into standards-based grading (SBG). The key idea is that instead of receiving scores on specific assignments (such as unit 1 test or chapter 12 test), students receive scores on specific objectives. This steers the focus away from points in the traditional sense and towards what students truly need to know. Another key feature is that students have the opportunity to reassess standards, usually with the new score replacing the old one. This dramatically lowers the stakes for students. They can take a risk, trying a new approach to a problem or a lab, knowing that if they fail, they can always try again. In addition, students can’t get away with forgetting what they learned since every standard will be assessed multiple times. In many cases, teachers record only the most recent score for a standard, even if it goes down, with the goal of making a student’s final grade represent their knowledge and skills at the end of the course.

This is also good timing for a shift in my grading practices. My building has had a group of teachers studying the issue of grading for a few years and they have arrived at several grading practices that every teacher in the building will need to follow next year, which means I’ll be making some changes no matter what, so I may as well make some big ones. The first task, however, is to make sure I see how I can fit SBG into next year’s requirements.

Requirement 1: Grades will have three weighted categories: summative (75%), formative (15%), and cumulative final (10%).

A major tenet of SBG is that students should have the opportunity to practice and master content without being penalized for mistakes, so the formative category isn’t in line with SBG, but I think I know how I’d like to approach this requirement. The summative category is where I’ll place the course objectives. To keep things simple, I’ll update scores on each objective every time it is assessed so that only the most recent score affects a student’s grade. The formative category is where I’ll record scores for the formal lab reports I have students write (usually two per trimester). I see the lab reports as addressing overarching skills, such as scientific practices and communication, that I would like to include in grades but that are much broader than the typical content objective. At this point, I’m comfortable placing the lab reports in the formative category in order to give those skills more weight than a single objective.

Requirement 2: In-progress and final grades will be reported as a percentage and mapped to a traditional letter grade.

Our gradebook software reports student percentages to two decimal places, a level of precision I don’t think I’m capable of as a grader. But it’s what we have, and percentages aren’t going away in my district any time soon, so I need to figure out how I’m going to work within those confines. For now, my plan is to simply make each objective an assignment worth whatever maximum I set my scale to. The summative category will then be worth points equal to the number of objectives × the maximum possible score on each objective. The software will then take an average that it uses as a student’s grade in the summative category.

The main issue I have with this approach is that a student could conceivably get a respectable grade with no progress towards mastery on some objectives (this can happen just as easily in a traditional grading system; it’s just easier to hide). For this year, I want to keep things simple, so I’m planning to just keep this in the back of my mind; I doubt I’ll see a significant number of students who do well overall but ignore a few key standards. Down the line, I may try conjunctive SBG, where certain standards are required to earn a passing grade. I may also consider giving certain standards more weight in the gradebook, either because they are more complex or because they are more crucial to future learning than the other standards.
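Here is a rough sketch of the gradebook arithmetic described above, written in Python. The category weights come from Requirement 1; the objective names, the 4-point maximum, and the sample scores are all made up for illustration, and the real gradebook software may round or average differently.

```python
# Category weights from Requirement 1; everything else here is illustrative.
WEIGHTS = {"summative": 0.75, "formative": 0.15, "final": 0.10}
MAX_SCORE = 4  # assumed top of the objective scale


def record_score(latest_scores, objective, score):
    """Overwrite the stored score so only the most recent attempt counts."""
    latest_scores[objective] = score


def summative_percent(latest_scores):
    """Average the latest score on every objective, as a percentage."""
    earned = sum(latest_scores.values())
    possible = len(latest_scores) * MAX_SCORE
    return 100 * earned / possible


def course_percent(latest_scores, formative_pct, final_pct):
    """Combine the three weighted categories into one course percentage."""
    return (
        WEIGHTS["summative"] * summative_percent(latest_scores)
        + WEIGHTS["formative"] * formative_pct
        + WEIGHTS["final"] * final_pct
    )


# Hypothetical student: strong on three objectives, stuck on one.
scores = {}
record_score(scores, "CV.1", 4)
record_score(scores, "CV.2", 4)
record_score(scores, "CA.1", 4)
record_score(scores, "CA.2", 3)
record_score(scores, "CA.2", 2)  # a reassessment replaces the earlier 3

print(summative_percent(scores))  # 87.5
```

In this made-up case the student lands at 87.5% in the summative category even though one objective has slipped to a 2, which is exactly the kind of gap a simple average can hide.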

Requirement 3: Every class will have a culminating activity during the final exam period.

While I haven’t found much on final exams in the SBG materials I’ve read so far, giving significant weight to what a student does during a certain 90-minute period seems to go against much of the thinking behind SBG. Ideally, what I’d like to do is move away from a traditional written exam, where students do an assortment of problems from throughout the trimester, and toward a more authentic assessment. One option would be an open-ended project, such as Casey Rutherford’s final project where students must come up with a physics question, then collect data to answer it. Another option would be to follow my district’s STEM integration efforts and develop an engineering design challenge where students must apply physics to solve some kind of real-world problem. The trick here would be to come up with something where students would truly have to apply their physics knowledge in a meaningful way.

Requirement 4: Scores no lower than 50% will be recorded for any summative assessment students attempt.

I absolutely agree with this requirement; it makes no sense that most grades cover a range of 10 percentage points (less if you count grades with a + or -) while an F covers 60 percentage points. The main trick is what it will look like to follow this guideline using SBG. My plan is to give students a numerical score for each standard, and have the floor at half the points. For example, many teachers who use SBG give their students a 1, 2, or 3 on each standard they attempt. I will probably give a 2, 3, or 4, instead.
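A quick sketch of why shifting the scale satisfies the 50% floor, assuming the gradebook converts each score to a percentage of a 4-point maximum (the function names are hypothetical):

```python
MAX_SCORE = 4  # assumed top of the gradebook scale


def gradebook_score(rubric_level):
    """Shift a 1-3 rubric level up one point to the 2-4 gradebook scale."""
    if rubric_level not in (1, 2, 3):
        raise ValueError("rubric level must be 1, 2, or 3")
    return rubric_level + 1


def as_percent(score):
    """Convert a gradebook score to the percentage the software reports."""
    return 100 * score / MAX_SCORE


for level in (1, 2, 3):
    print(level, as_percent(gradebook_score(level)))
```

The lowest level a student can earn on an attempted standard comes out to 50%, the middle level to 75%, and the top level to 100%.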

Requirement 5: Students will have at least one reassessment opportunity on all summative assessments.

This requirement is very much in line with SBG; the only question is how I want to manage reassessments. In a good physics class, there is some spiraling of content that happens naturally, and I plan to treat that as one option for reassessment. For example, I had some students this year who did poorly on linear constant acceleration, but, by the time we finished projectile motion, were nailing complex problems that used the same skills. In those cases, I would have no problem updating a student’s scores for constant acceleration objectives.

I also want to offer more explicit reassessment opportunities. I am a fan of Sam Shah’s reassessment application and am planning to modify it for out-of-class reassessment. I really like that he forces students to reflect on what got in their way and to articulate what they’ve done to improve, rather than allowing students to take the all-too-familiar approach of just trying again on the assumption that it will go better.

I’m also considering Kelly O’Shea’s “test menus” for in-class assessments. It sounds fairly easy to manage for a large number of students (which is important, since my average class size will be somewhere above 30 next year) while still providing significant student choice in their assessment.

What’s Next

I feel like I’ve got the broad strokes in place for next year, but there are still a lot of details to work out. My next big task will be to revise my objectives. My district has been using learning targets (a certain flavor of objective) for a few years, but we didn’t have much dedicated time to work on objectives, so mine are, at best, mediocre. If they are going to become the basis of my gradebook, I need to put in the time to write clearer, more precise learning targets.

My other big summer task will be to finalize (at least for now) some of the details for how I want to grade and revise my syllabus accordingly. While I fully expect to revise my syllabus and details of my grading system as the year progresses, I need to have some of the structure worked out before the fall to help students feel some sense of security in this new adventure.

Engineering to Learn Science

Posted in STEM on November 15, 2014

I teach a one-trimester 9th grade course called Engineering & Physical Science. For the engineering standards, I fell into the same kind of engineering projects that I’ve seen many science teachers fall into. My students did a short straw tower project that did an okay job of teaching the nature of engineering standards from the Minnesota Science Standards and was something the students enjoyed, but connected to science concepts at a superficial level, at best. I was well aware that, since students were not able to apply their knowledge in a meaningful way to design their towers, the project was really just tinkering.

This summer, thanks to a combination of my district’s participation in the University of Minnesota EngrTEAMS project and a generous grant from 3M, I was able to get not only some professional development over what good engineering instruction looks like, but also the significant curriculum writing time and materials budget that developing more meaningful engineering instruction takes. The past two weeks in my 9th grade classroom, I had the rewarding experience of implementing the unit I developed with a teacher from St. Paul Public Schools and an instructional coach from EngrTEAMS.

The unit began with instruction over Newton’s Laws to prepare students to design a cargo carrier that would protect an egg in a head-on collision after rolling down a ramp, a variation on the classic egg drop project. To keep students focused on the cargo carriers, where they could apply Newton’s Laws most directly, we provided cars the carrier could Velcro to. The cars also had a spot on the front to attach a Vernier Dual-Range Force Sensor to measure the impact force when the vehicle crashed.

Realistically, students could create an effective cargo carrier without knowing anything about Newton’s Laws, so a major instructional task has been to give students a reason to make the connection. Next week, students will be delivering presentations where they make a pitch for their design, which must include references to Newton’s Laws to justify design decisions. To prepare students for this task, I’ve been spending a lot of time going from group to group to ask them to explain what they are doing, and I’m excited about the results. Students who are normally checked out were not only able to articulate connections between Newton’s Laws and their designs, but some even started participating in class discussions intended to extend their understanding to other contexts. Even when I just listened in, rather than asking about connections to Newton’s Laws, students had a lot of great conversations about how to use Newton’s Laws to improve their design. The presentations will also give students a chance to share what they’ve been thinking with the entire class.

The past week and a half, while students have been designing, building, and testing, my classroom has been chaos, filled with noise and mess and activity. Because that chaos is a result of students who are engaged and excited about their work, I was glad to embrace it. My challenge now is to find ways to bring some of that same energy and ownership into other topics in the 9th grade course.

Free Fall and Assessment

Posted in Physics on September 28, 2014

The bulk of this week was spent wrapping up acceleration by doing some problems with free fall. It took some time, but my students are getting comfortable with graphical solutions instead of more traditional approaches. Students continue to talk about what’s happening in the problem, rather than the formulas, which is great to see. A few kids are trying to memorize formulas, but watching their peers who use the graphs apply what they know to new situations with relative ease has helped convert the memorizers.

This week was also the first test in physics, and a lot of kids “took the bet and lost.” Based on the reading and thinking I’ve been doing about assessment and grades, I’m grading a lot less than in previous years. The trick is that students used to the way most teachers grade translate “not graded” as “not worth doing.” Not surprisingly, these students were not prepared for the test. That said, even when I’ve graded almost everything, I’ve had students find ways to copy or otherwise get out of doing the daily work, then have the first test hit them like a truck.

To try and address this problem, I stole an idea from Frank Noschese and have been giving my students weekly, self-graded quizzes. In addition to all the other benefits of frequent, low-stakes assessments, I hoped my students would figure out the benefits of engaging in the daily work early on. It worked for most of my students, and I saw more students digging into their work after the first quiz, but the stakes were too low for others to catch on.

I’m doing a two-stage collaborative exam for this test, so students will have a chance to recover come Monday. I’m looking forward to seeing how that goes. In the future, however, I’d like to work on strategies to get students away from the idea that “not graded” means “not worth doing” a lot earlier. I may make those early quizzes worth more points (at least on paper) or split our 1D motion into separate tests over constant velocity and uniform acceleration so that students will be taking the first test a lot sooner.

Graphical Solutions

Posted in Physics on September 21, 2014

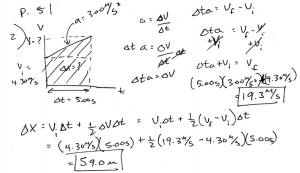

This week, students started using graphical solutions to solve problems for an accelerating object. I was introduced to this approach by Kelly O’Shea and Casey Rutherford at a workshop this summer. Rather than showing my students the usual assortment of kinematic equations, I’ve been hammering what has physical meaning on a graph, especially on velocity vs. time graphs. When given a word problem, students sketch the motion graphs that describe the problem (especially the velocity vs. time graph) and use them as tools to solve for the unknown information. I didn’t get a chance to snap a picture of a student sample, but here’s a problem I did.
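For readers who haven’t seen the approach, the core idea can be sketched in a few lines of Python: on a velocity vs. time graph for uniform acceleration, the slope is the acceleration and the area under the line is the displacement. The numbers below are invented for illustration and are not from the problem in the picture.

```python
def acceleration_from_vt(v_initial, v_final, elapsed):
    """Slope of a straight-line velocity vs. time graph."""
    return (v_final - v_initial) / elapsed


def displacement_from_vt(v_initial, v_final, elapsed):
    """Area under a straight-line velocity vs. time graph (a trapezoid)."""
    return 0.5 * (v_initial + v_final) * elapsed


# Hypothetical problem: a cart speeds up from 4 m/s to 10 m/s in 3 s.
print(acceleration_from_vt(4, 10, 3))  # 2.0 (m/s^2)
print(displacement_from_vt(4, 10, 3))  # 21.0 (m)
```

Students do this by sketching and reading the graph rather than by calling a formula, but the two functions are the same slope and area reasoning written out.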

I really like this approach so far. When I’ve listened in while students were working problems, I hear very different conversations than in the past. I used to hear a lot of talk about the formulas, with students focused on either formula hunting or trying to figure out hard and fast rules for when to use each formula. This week, I heard discussions about what the objects in the problem were doing and what the implications of that were. It was great to see.

Another aspect I really like about this approach is how clearly students see the connections to calculus. I’ve had several students who took calculus last year say they are gaining an appreciation for why calculus is useful, adding a layer of richness to their knowledge. Even better, the students who are currently taking calculus feel like they are taking what they’re learning in calculus straight into physics class.

The big challenge I encountered this week is that some of my students are struggling with the demands I’m placing on them. The graphical solutions require them to pay more attention to what is actually happening in the problem than approaches I used in the past. Students also completed some labs and open-ended worksheets that required them to determine an appropriate approach to the problem at hand. As a result, students spend at least some time each class period dealing with confusion or struggling with a concept. I think this is a good thing, but a lot of students find this uncomfortable and wish the class had more direct instruction and traditional worksheets. Students are consistently getting to where I want them to be by the end of the class period, so I think I’m on the right track with the scaffolding I’ve been providing. What’s lacking is a growth mindset à la Carol Dweck. It’s time for me to re-read her book and work on explicitly incorporating strategies in my classroom to help students develop a growth mindset.

Motion Graph Mania

Posted in Physics on September 14, 2014

This week in physics was all about making sense of position vs. time and velocity vs. time graphs. Since I’m planning to have my students use the graphical solutions approach I learned from Kelly O’Shea and Casey Rutherford this summer, motion graphs will need to be second nature to my students. To make sure my students have motion graphs down, I’ve dedicated a lot more time to them than the previous physics teacher did. Based on the progress I saw this week, the time was well spent for most of my students.

The one group that grumbled a bit were my students who took AP Calculus BC last year. I already knew the teacher, Karen Hyers, works very hard to place math content in meaningful contexts and did some work with motion graphs in her calc classes, but I hadn’t really appreciated how thoroughly she covered motion graphs. My students who took calc with her last year easily recalled how to do just about everything I put in front of them this week. Around 1/3 to 1/2 of my students fall into this group, so next year I want to work on some strategies for differentiation to keep the calculus students challenged. The upside is several students commented they didn’t realize how connected physics and calculus really are.

There was also some pretty cool peer instruction that happened thanks to the calculus students. Most groups had at least one calc student, which means someone knew what the answer should be and I didn’t have to worry too much about whether groups would get there. However, because that person was another student, the rest of the group wasn’t afraid to question them and argue a bit before agreeing on the correct answer in ways that don’t often happen when a student is talking to a teacher. These discussions helped many of my students to actually understand the graphs, which is much preferable to simply memorizing what the graphs for certain cases should look like.

But the best part of the week? A student told me what’s hard about physics so far isn’t the content, it’s the way I’m making them think about it.

A New School Year Begins

Posted in Physics on September 6, 2014

This week was the first of a new school year. I’m trying to shift my approach this year to make inquiry a central feature of my classroom, pulling ideas from a few different sources, including Modeling Instruction and the 5E model. Whenever someone tries to change things, some aspects will be great and some will have room for improvement.

In the past, the other physics teachers and I have introduced constant speed by measuring the time at set positions for a bowling ball rolled down the hallway. The lab works fairly well, but the logistical issues (including the number of people and the space needed to collect the data) mean that the lab is done as an entire class. I wanted to have each group collect their own data and played with various options using equipment we have. I settled on using ticker tape and dynamics carts. Since this would be their first exposure to ticker tapes, I knew students would need instruction over how to use them. I started with a discussion (borrowing some ideas from Kelly O’Shea) to determine we’d need to measure position and time, then showed students how to use the ticker tape to measure each of those. I wanted students to make some experimental design decisions, so all I added is that students should have a table of their cart’s position and time by the end of the period.

It did not work. I underestimated how much mental effort using the ticker tape would require from my students, so they had a lot of trouble dealing with the other decisions I asked them to make. I also wasn’t explicit that students should make a written note of what they were trying to produce, so a lot of students forgot what they were supposed to do by the time they managed to get a tape with a nice series of marks. During my first hour, I ended up pausing the lab a couple times to give some extra direction when I saw multiple groups struggling with the same issues. In later hours, I provided a lot more structure right from the start, including prompts for students to write down information they would need to reference later. Fortunately, my first hour students were pretty forgiving; I think it helped that I’ve talked to them a bit about the shifts I’m trying to make and why, so they saw where I was coming from.

I was pleased with how the analysis of the lab went, however. I’d hoped to have students try creating a few different types of graphs using Plotly to get at why a scatterplot is the best option for a position vs. time graph, but I wasn’t able to get my hands on a netbook cart, so I stuck with a short discussion. My students were able to agree pretty quickly that a scatterplot was the best option and were able to articulate why. Each group then graphed their data and performed a linear regression using either Desmos or the TI graphing calculators most of them have. Groups sketched their graphs on whiteboards, and we had a class discussion looking for similarities and differences in the graphs. Thanks in part to how many students have already taken AP Calculus, students were able to pretty easily identify and articulate what on the graphs had physical meaning, which meant I didn’t have to deliver any lecture on constant speed.
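For anyone curious what the regression is doing behind the scenes, here is a minimal Python sketch of a least-squares line fit. The data are invented and idealized (real ticker tape data will scatter around the line), and this is not the exact routine Desmos or a TI calculator runs, just the standard formula for the slope and intercept.

```python
def linear_fit(times, positions):
    """Least-squares line through the data; returns (slope, intercept)."""
    n = len(times)
    mean_t = sum(times) / n
    mean_x = sum(positions) / n
    s_tx = sum((t - mean_t) * (x - mean_x) for t, x in zip(times, positions))
    s_tt = sum((t - mean_t) ** 2 for t in times)
    slope = s_tx / s_tt
    intercept = mean_x - slope * mean_t
    return slope, intercept


# Made-up, idealized cart data: time in s, position in cm.
times = [0, 1, 2, 3, 4]
positions = [2, 5, 8, 11, 14]

speed, start = linear_fit(times, positions)
print(speed, start)  # 3.0 2.0
```

The slope is the cart’s speed (3 cm/s here) and the intercept is its starting position (2 cm), which are the two features of the graph with physical meaning.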

Moving forward, I’ll save tools unfamiliar to my students, such as the ticker tape, for labs where the data collection is pretty structured, rather than try and use them for open-ended labs introducing a new topic. This year, that may mean some compromises, such as collecting data as a class or other large group and keeping items (like motorized constant speed buggies) in mind for this spring’s order.

I also want to keep working on how to have effective class discussions. I had several students tell me how much they loved that I lectured less than 10 minutes in the first week and I have every intention of keeping that number as low as I can. In order for students to continue to get the content out of discussions, I need to improve my skills at facilitating them. That will mean lots of reading, lots of formative assessment (to see if my students know their stuff), and lots of reflecting on how discussions went.

All in all, I’m excited about the shifts I’m making. I’ve loved seeing more of my students’ thinking on display this week and they’ve been very engaged so far. There will definitely be more hiccups and missteps, but those are just opportunities to learn.

Why Use Interactive Notebooks

Posted in Notebooks on August 31, 2014

I’ve been thinking a lot about interactive notebooks the past few weeks. In addition to the usual preparations for a new school year, I was asked to co-lead a workshop on interactive notebooks as part of my building’s welcome back week. Preparing for the workshop forced me to articulate why I use interactive notebooks in my classroom and what benefits I have seen.

The idea behind interactive notebooks is to provide a single place where students keep as much of their work as possible, usually with a table of contents to make it easy to locate specific entries. The notebooks are typically structured so that right hand pages contain entries where students gather information, such as data collected during labs, notes, or reading assignments. Left hand pages are then used for students to process information in a variety of ways. Sometimes I use those pages for analysis on a lab. Other times, I have students do a short writing assignment that may be as simple as explaining how a science concept appears in the student’s own experience or may be more complex, such as an assignment I give for students to come up with and explain their own mechanical analog for a circuit.

The most obvious benefit of using interactive notebooks is organization. The simple fact is most students do not have an effective system for keeping track of their work, leading to folders and lockers that are a mess of rumpled papers that make it difficult to locate a specific page when it is needed. With a clear structure for interactive notebooks, I’ve seen some of my most disorganized students manage to keep track of their work.

During the workshop, organization ended up being the focus of our presentation. We’d been asked to give the workshop as part of an effort to make sure every teacher had at least one effective organizational strategy to implement and organization is what first got my co-presenter and me to try interactive notebooks. But, having used notebooks for several years, I would argue that organization is the least interesting benefit. Organization is also what generated the least interest from my colleagues who attended the workshop.

What people were more interested in, and what I’ve found to be the most significant benefit, are the ways interactive notebooks have helped my students to develop a sense of ownership over their learning. With very little effort on my part, I’ve seen a shift in the kinds of questions students ask me. I used to get a lot of students who wanted me to give them a definition, formula, or other piece of factual information previously addressed. Notebooks make it much easier for students to retrieve this information, which means I now spend my time working with students on more meaningful questions.

Using interactive notebooks pushed me to include more writing in my class and to shift toward assignments built around higher-order tasks. I noticed very quickly that these kinds of assignments lead to students organically sharing their work. It’s exciting to see my students take so much pride in their work that they can’t wait to show it to their peers, or to watch students ask how their classmates approached a task because they are genuinely curious. As I’ve shifted to using more and more open-ended inquiry, I’ve had the privilege of seeing this excitement from my students more and more often.

So far, I’ve only used notebooks with my 9th grade physical science students, but this fall I’m taking over the 12th grade honors physics course. Moving forward, I’m thinking about what I want notebooks to look like with more advanced students. I’m making some significant revisions to the course in an effort to integrate a lot more inquiry and I should be able to give my 12th grade students labs that are more open-ended than I’ve used in the past, especially since the 12th grade course is a full year while the 9th grade course is only 12 weeks. Since lab notebooks are a natural fit for this kind of approach, my current plan is to focus on using notebooks for students to keep a good record of their work in the lab for this year. As the year goes on and I get the hang of the new course, I can be more intentional about the writing students do in their notebooks.